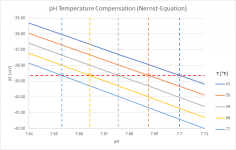

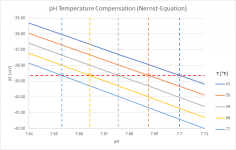

I played around a little with the Nernst equation, which is basically what's behind the temperature compensation of a pH-meter (an "ideal" pH-meter, that is, one that follows the Nernst equation 1:1). Here is a graph showing the results for temperatures between 41°F and 77°F (5°C and 25°C):

It shows that the same raw data (i.e. the potential difference measured by the meter in mV) gets corrected to pH-values that are only about 0.05 pH-units apart across that temperature range. Not very much...
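For anyone who wants to reproduce that kind of curve, here is a minimal Python sketch of the ideal Nernst conversion. It assumes an ideal electrode that reads 0 mV at pH 7 and has the textbook Nernst slope. The function names and the raw reading of -41 mV (roughly pH 7.7) are picked purely for illustration and are not values taken from the meter.

```python
import math

R = 8.314462618   # gas constant, J/(mol*K)
F = 96485.33212   # Faraday constant, C/mol

def nernst_slope_mv(temp_c):
    """Ideal electrode slope in mV per pH unit at the given temperature."""
    return 1000.0 * math.log(10) * R * (temp_c + 273.15) / F

def ph_from_mv(mv, temp_c):
    """Ideal pH for a raw reading in mV, assuming 0 mV at pH 7."""
    return 7.0 - mv / nernst_slope_mv(temp_c)

raw_mv = -41.0  # hypothetical raw reading, roughly pH 7.7
for temp_f in (41, 50, 59, 68, 77):
    temp_c = (temp_f - 32) / 1.8
    print(f"{temp_f}°F ({temp_c:.0f}°C): pH {ph_from_mv(raw_mv, temp_c):.3f}")
```

With these assumptions, the spread between the coldest and the warmest temperature comes out at about 0.05 pH-units. The spread scales with the distance from pH 7, so a reading closer to neutral would show even less.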

Then I did some testing in my pool, which currently sits at about 22°C (or about 71.6°F). First I made sure that my pH-meter (while still in its cap with storage solution) also showed a temperature of pretty much 22°C. Then I put it directly into the pool without rinsing it first, so that the temperature wouldn't change, and moved it around a bit so that the storage solution got flushed out. In the first 10-30 sec, the pH-value changed by about 0.02 pH-units and then stayed rock stable for minutes. Based on that, I would rule out effects like CYA-drifts (my CYA is at 80ppm, maybe slightly less after I had to drain some water after the last rain), at least on a time scale of a few minutes. And the actual potential difference measurement seems to be stable after about 10-30 sec if there are no temperature effects to consider.

Then I rinsed the meter, put it back into the storage solution and warmed it in my hands up to about 30°C (86°F). If temperature compensation based on Nernst was the only effect, I would expect a reading about 0.02 pH-units lower than in the first test when putting the meter like that back into the 22°C warm pool water (some might call that cold, especially my wife...). But the instant reading was about 0.13 pH-units lower. It then took a couple of minutes for the temperature measured with the Apera to drop and for the measured pH-value to rise back to the same value as in the first test.
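As a rough check of that 0.02 figure, here is a minimal sketch of the compensation-only error. It assumes an ideal electrode with 0 mV at pH 7, a pool pH of 7.7 (an assumed value for illustration), and that the electrode voltage already corresponds to the 22°C water while the meter still converts it with the slope for 30°C. The function names are made up for this example.

```python
import math

R = 8.314462618   # gas constant, J/(mol*K)
F = 96485.33212   # Faraday constant, C/mol

def slope_mv(temp_c):
    """Ideal Nernst slope in mV per pH unit."""
    return 1000.0 * math.log(10) * R * (temp_c + 273.15) / F

def displayed_ph(true_ph, sample_temp_c, sensor_temp_c):
    """pH an ideal meter would display if its temperature reading is off.

    The electrode voltage follows the sample temperature, but the meter
    converts it back to pH with the slope for the temperature it senses.
    """
    mv = (7.0 - true_ph) * slope_mv(sample_temp_c)
    return 7.0 - mv / slope_mv(sensor_temp_c)

true_ph = 7.7  # assumed pool pH, for illustration only
error = displayed_ph(true_ph, 22.0, 30.0) - true_ph
print(f"compensation-only error: {error:+.3f} pH")
```

Under these assumptions the error is a bit under 0.02 pH-units on the low side, which is far too small to explain the 0.13 seen on the display.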

I suspect that the main effect is that the sensor, especially the internal reference cell, has to thermalize to the pool water temperature, and that the water layer directly around the measurement cell might also get affected by the initial temperature of the pH-meter. So there is not just temperature compensation being applied to an otherwise constant voltage measurement, but also a slow drift of the voltage measurement itself, caused by pH changes that result from the different temperatures in or around the measurement cells. While the pH was drifting during my second test, I switched the measurement mode to "mV" a few times and actually did see the mV-value drifting as well, so it is not just an effect of a wrong temperature measurement.

On a practical scale, these effects are usually still quite small, especially during the pool season. In the example above, with a temperature difference of 8°C (14.4°F), the initial measurement error of about 0.13 pH-units shrank to maybe 0.05 after one minute, which should be sufficiently accurate for pool maintenance.
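To get a feel for the time scale, those two numbers can be turned into a rough time constant. This is only a sketch: it assumes the error decays as a simple exponential (first-order thermal equalization), which two data points can't confirm, and the helper names are made up for illustration.

```python
import math

def time_constant_s(err_start, err_later, elapsed_s):
    """Time constant tau of an assumed decay err(t) = err_start * exp(-t / tau)."""
    return elapsed_s / math.log(err_start / err_later)

def error_at(err_start, tau_s, t_s):
    """Remaining error after t_s seconds under the same assumed model."""
    return err_start * math.exp(-t_s / tau_s)

# 0.13 pH-units initially, about 0.05 after one minute (second test)
tau = time_constant_s(0.13, 0.05, 60.0)
print(f"assumed time constant: about {tau:.0f} s")
for t in (0, 60, 120, 180):
    print(f"{t:3d} s: about {error_at(0.13, tau, t):.3f} pH-units off")
```

If the exponential assumption holds, the time constant is roughly one minute, so waiting two to three minutes would bring the error down to about 0.01-0.02 pH-units.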

In winter, the effects can be larger. As far as I remember from our last winter down under, the drift after sticking a room temperature pH-meter into cold pool water (maybe 50°F, i.e. 10°C) might have been about 0.2 pH-units (maybe more, I didn't pay that much attention to the initial reading).

I guess pre-adjusting the meter temperature to the pool temperature would give the most accurate results, but that seems a bit excessive. It could still make sense to do that from time to time (maybe at a time of the year when pool and room temperature are very similar, as is currently the case in my unheated pool in early autumn) to verify that there are no additional drifts that would indicate that it's time to replace the sensor head. Then again, that can also be checked during the regular sensor calibration, where buffer solution and pH-meter usually have the same temperature.